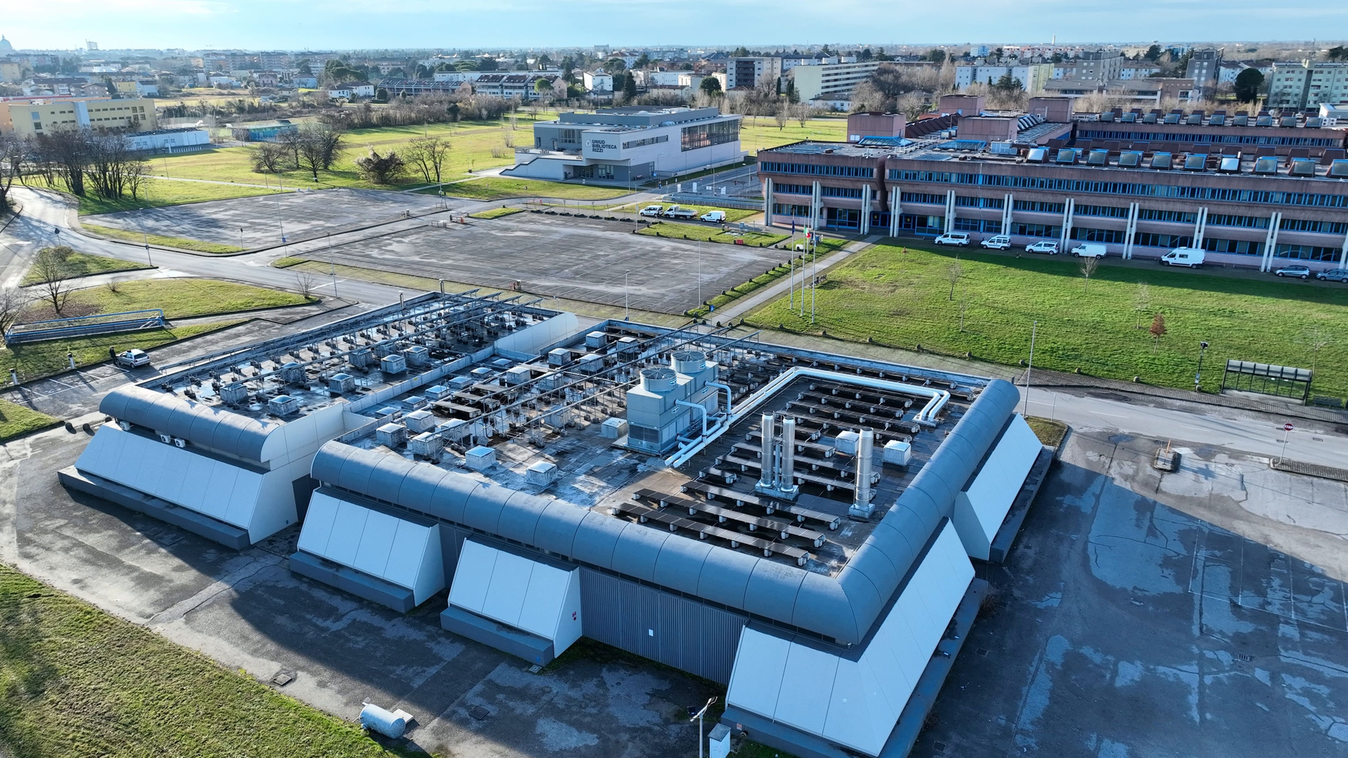

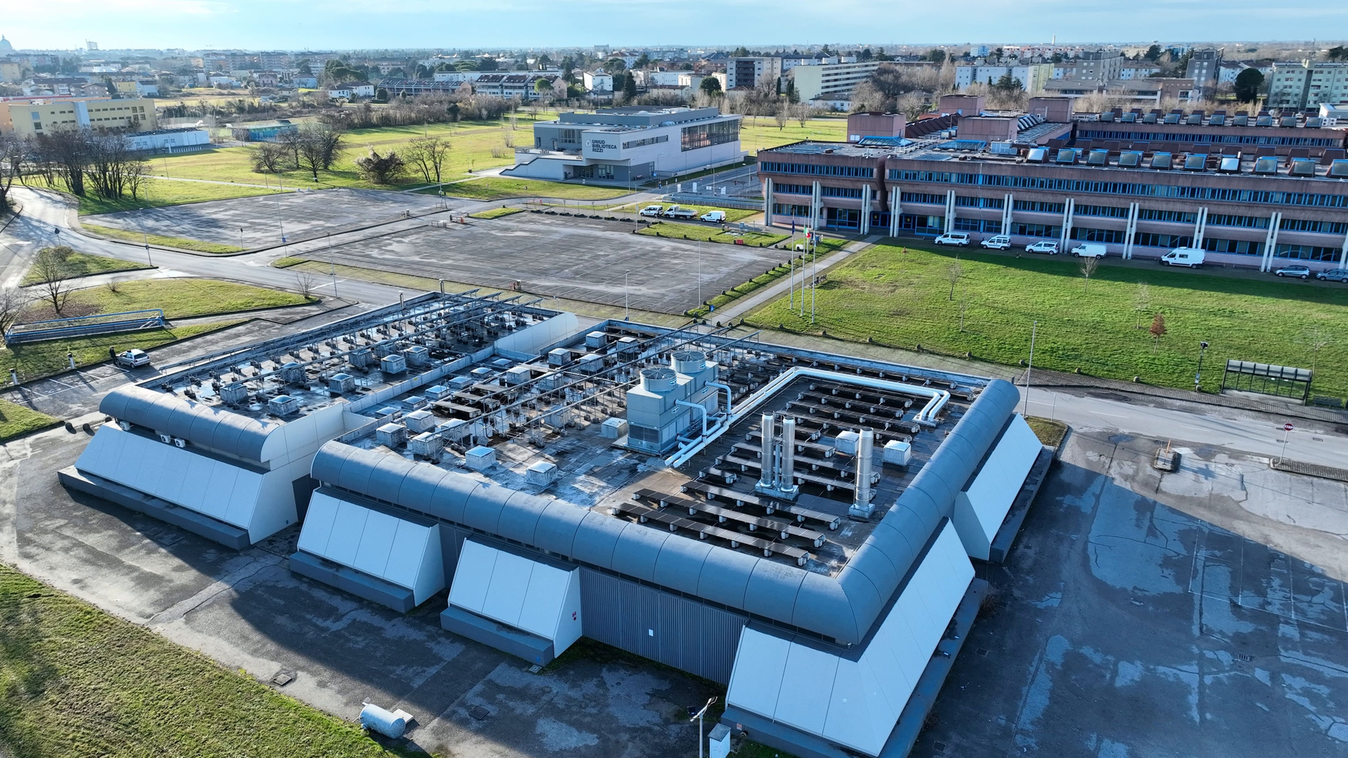

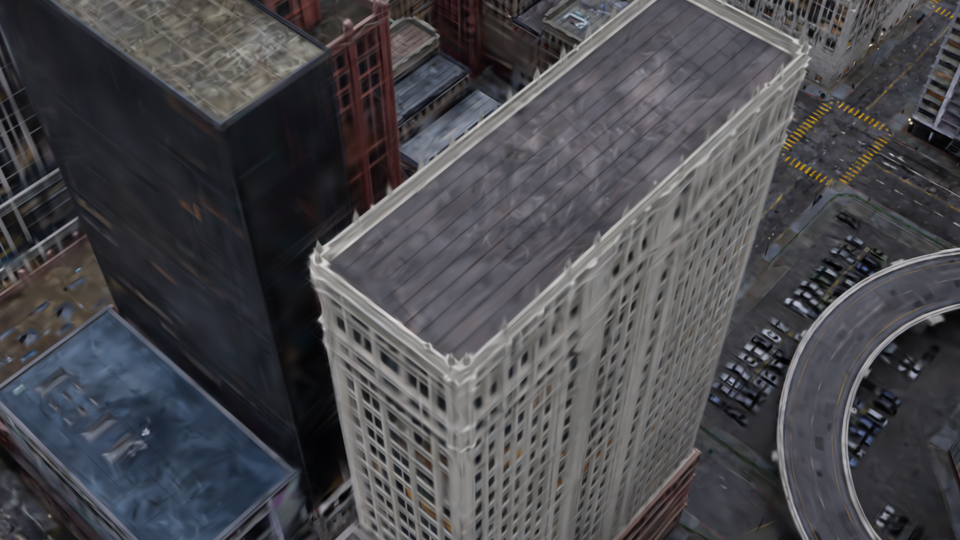

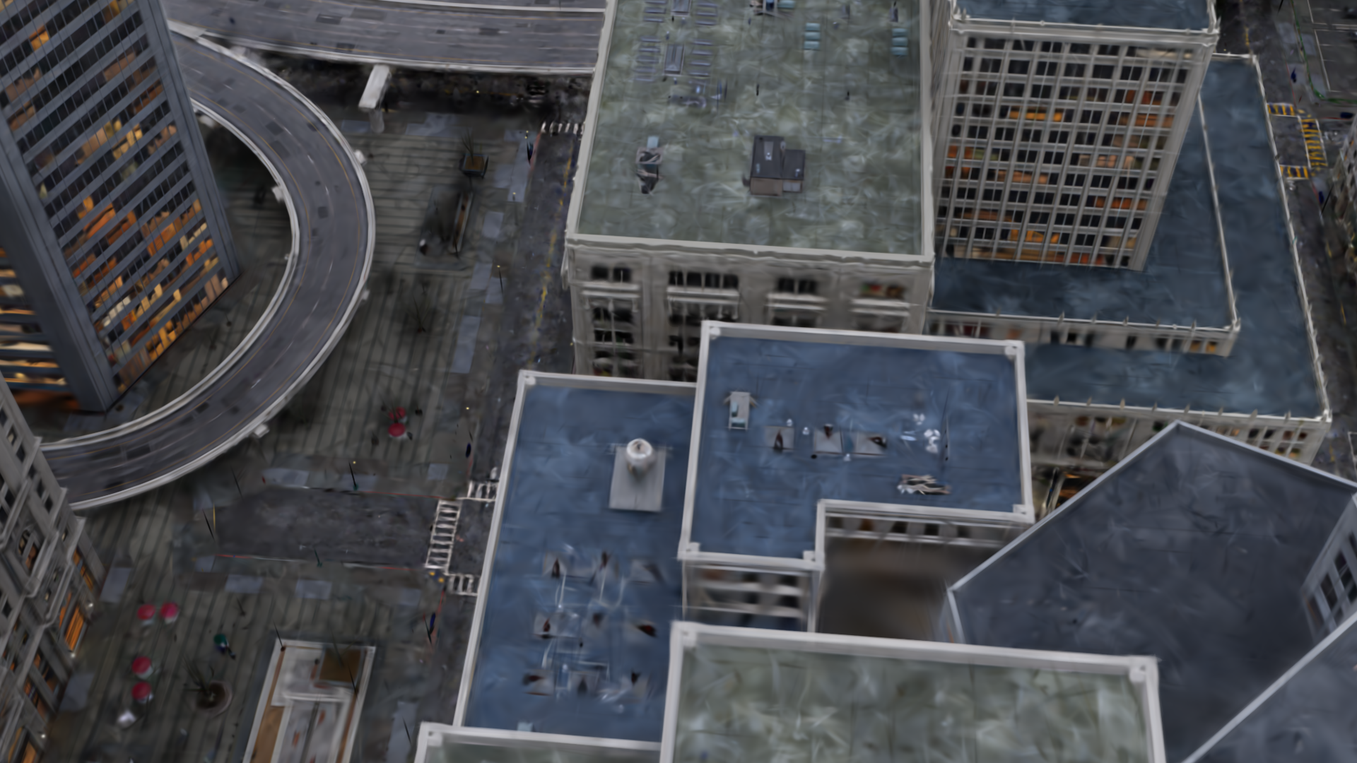

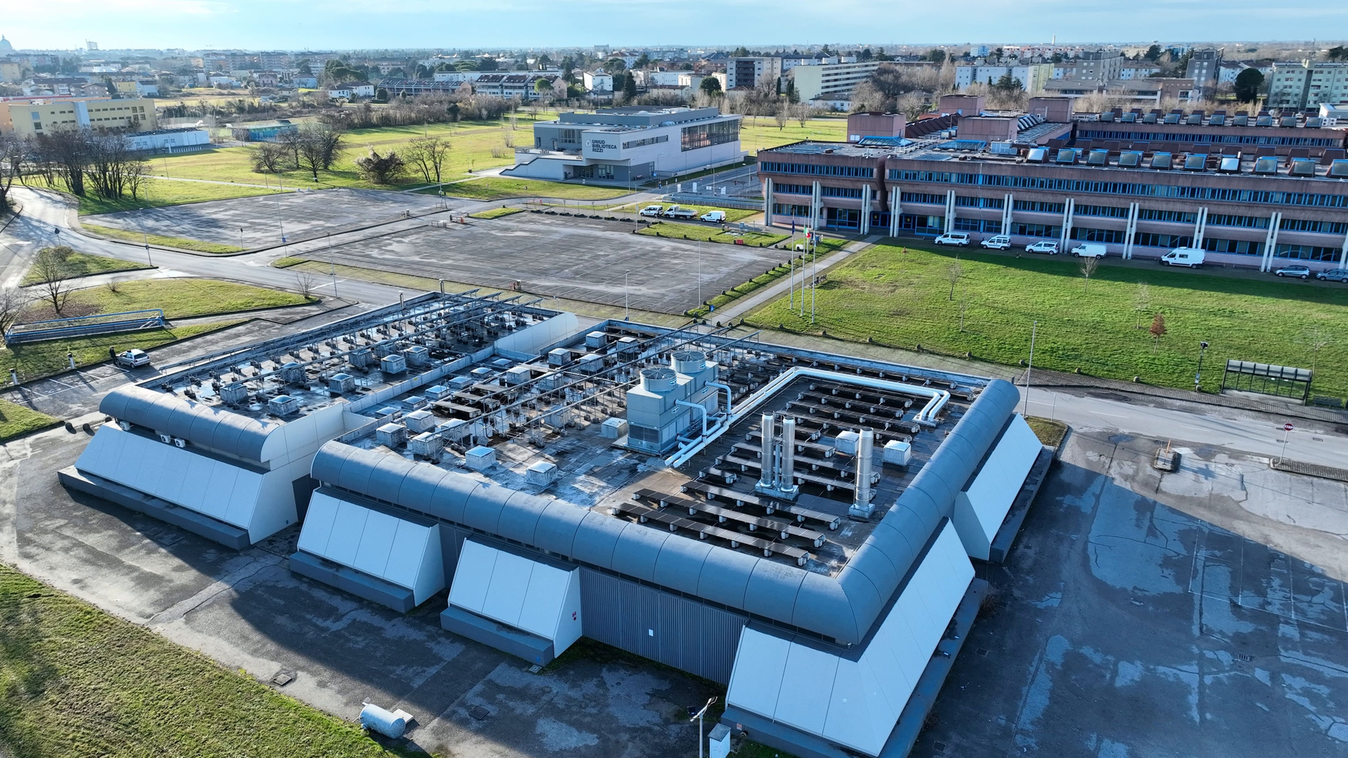

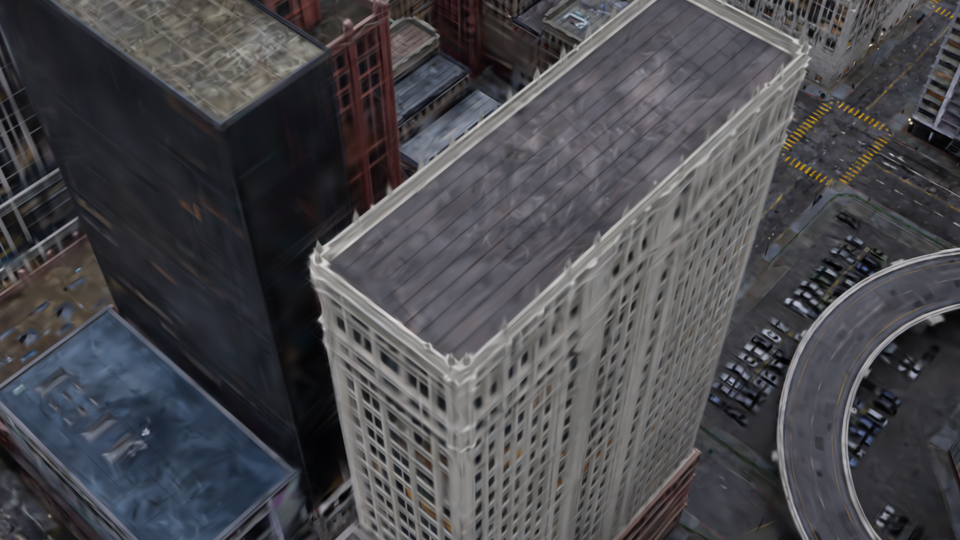

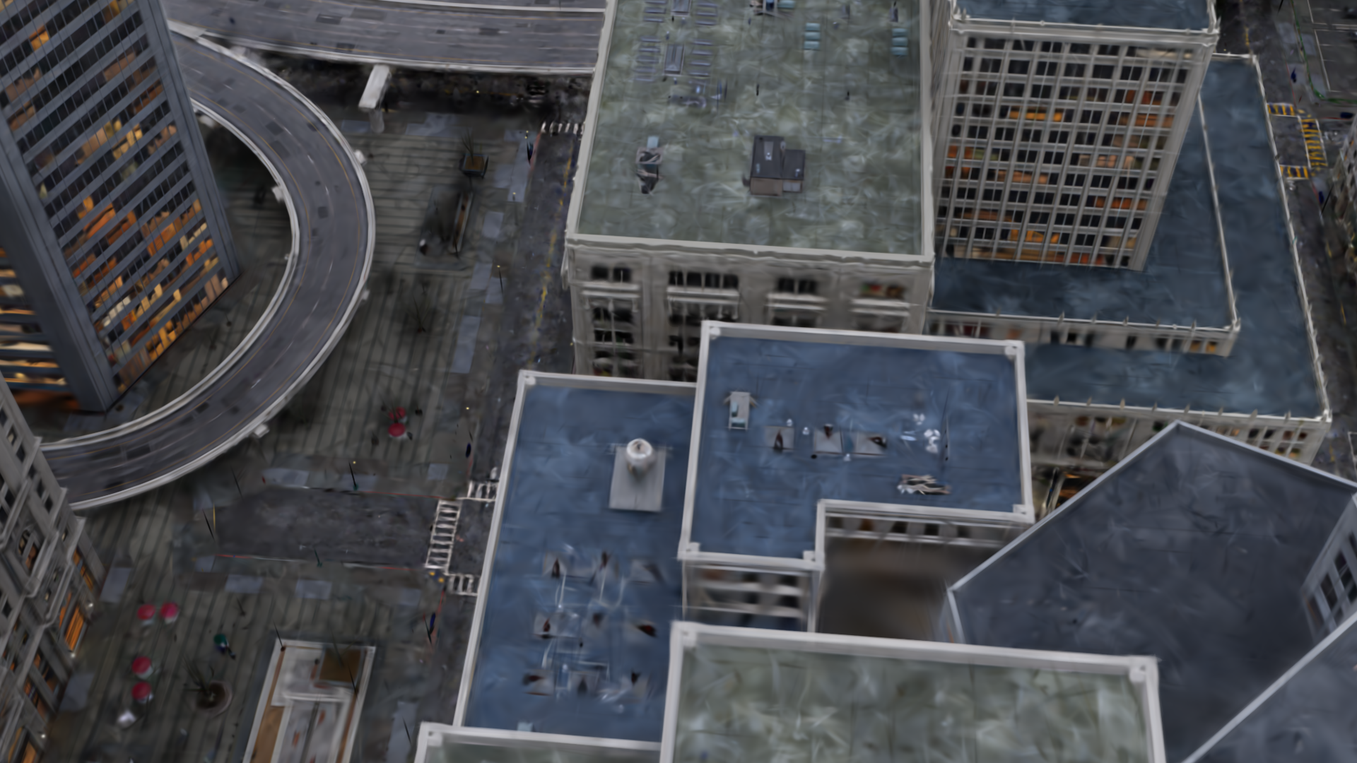

Gaussian Splatting has emerged as a high-performance technique for novel view synthesis, enabling real-time rendering and high-quality reconstruction of small scenes. However, scaling to larger environments has so far relied on partitioning the scene into chunks—a strategy that introduces artifacts at chunk boundaries, complicates training across varying scales, and is poorly suited to unstructured scenarios such as city-scale flyovers combined with street-level views. Moreover, rendering remains fundamentally limited by GPU memory, as all visible chunks must reside in VRAM simultaneously. We introduce A LoD of Gaussians, a framework for training and rendering ultra-large-scale Gaussian scenes on a single consumer-grade GPU—without partitioning. Our method stores the full scene out-of-core (e.g., in CPU mem- ory) and trains a Level-of-Detail (LoD) representation directly, dynamically streaming only the relevant Gaussians. A hybrid data structure combining Gaussian hierarchies with Sequential Point Trees enables efficient, view- dependent LoD selection, while a lightweight caching and view scheduling system exploits temporal coherence to support real-time streaming and rendering. Together, these innovations enable seamless multi-scale recon- struction and interactive visualization of complex scenes—from broad aerial views to fine-grained ground-level details.

Supported by a robust coarse-to-fine densification strategy, we are the first approach to train the entire scene directly on the LoD representation. A smooth Gaussian Hierarchy in combination with Sequential Point Trees allows us to find the right level of detail at blazing speeds.

In addition to level of detail, visibility culling reduces memory demand during training by efficiently streaming new content to the GPU.

The combination of all these techniques allows us to render giant scenes on modest hardware - at interactive framerates.

We provide further qualitative comparisons with related work here. Click on the toggle button to expand the comparison gallery.

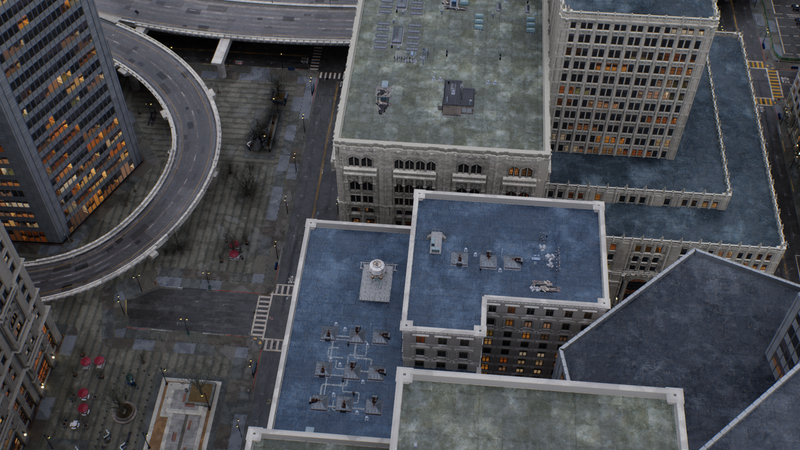

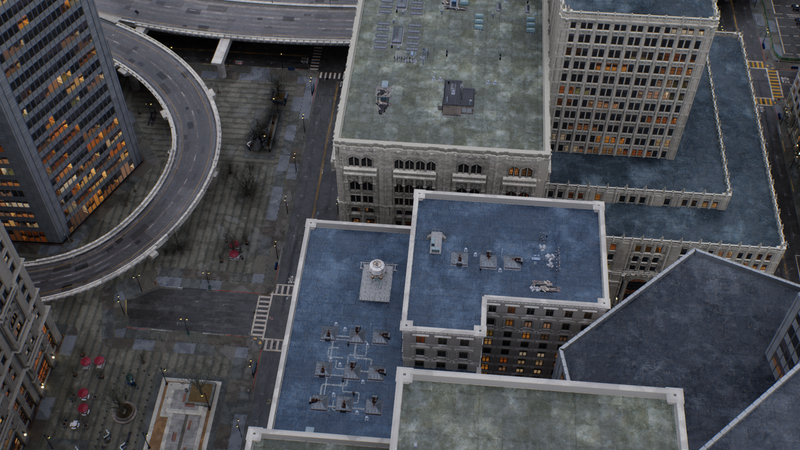

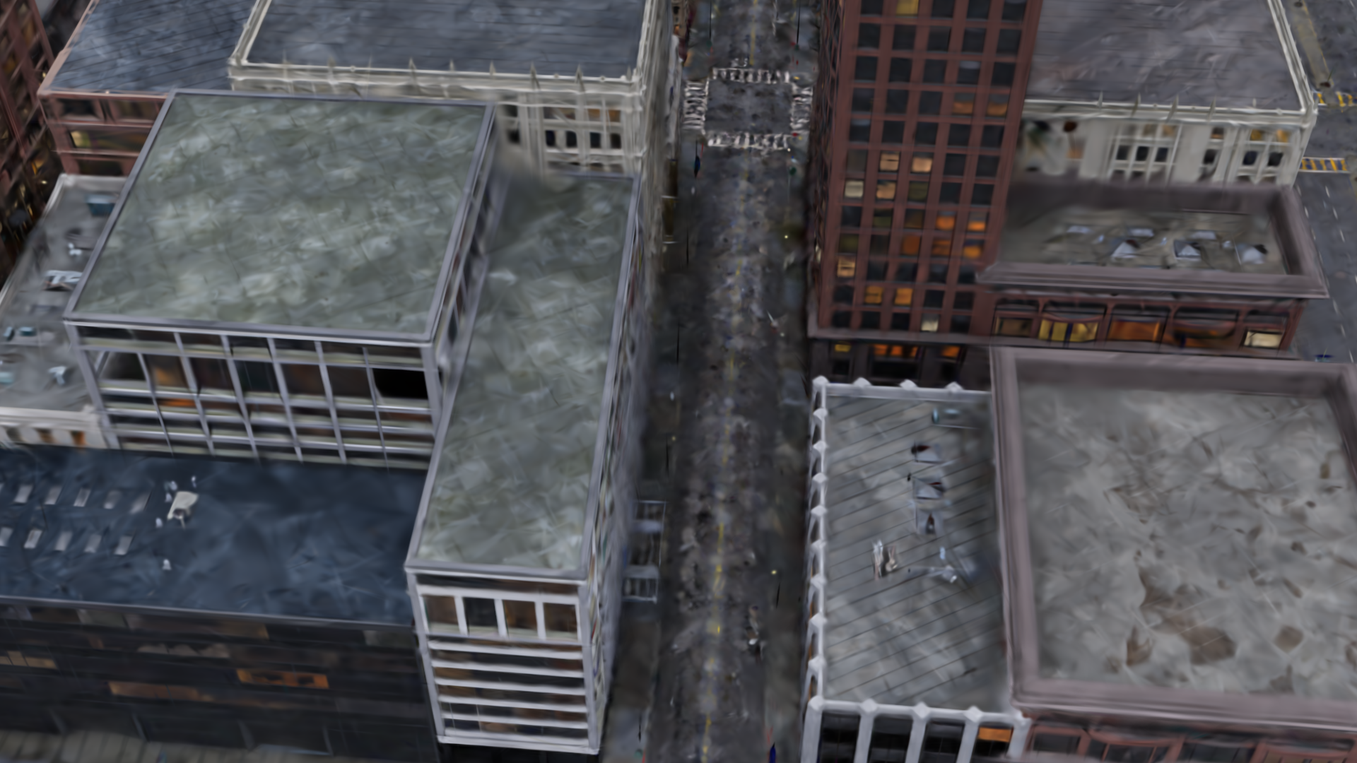

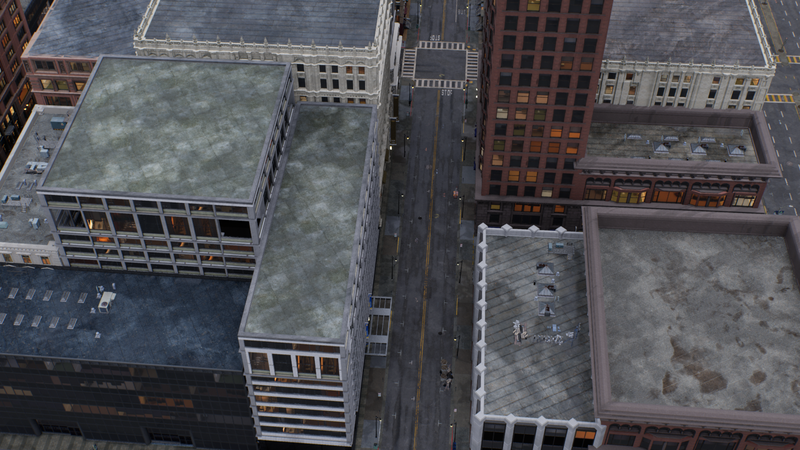

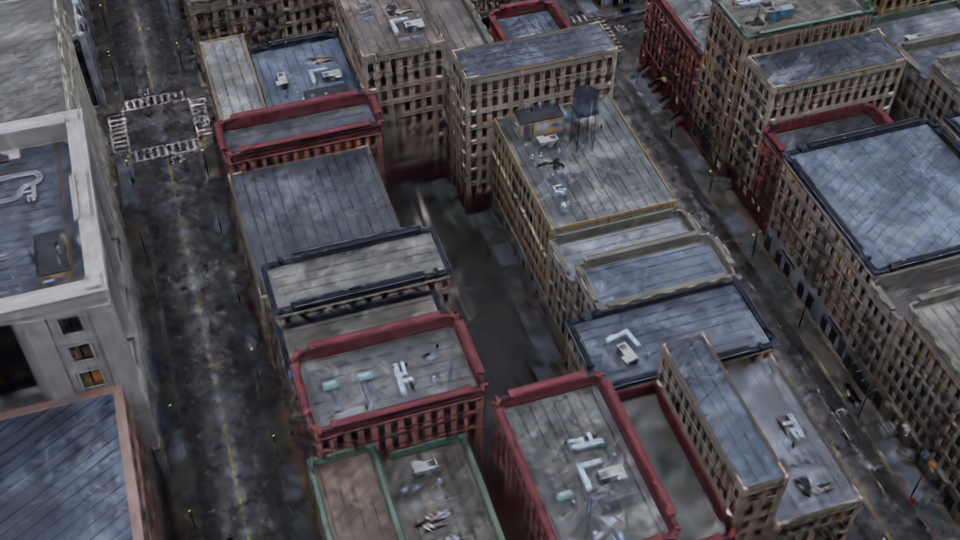

CityGS is mainly optimized for aerial datasets, resulting in blurry reconstructions when other perspectives are introduced.

CLM trains the entire scene using unified memory management, but does not adequately adapt the training process to enable ultra-large-scale reconstruction.

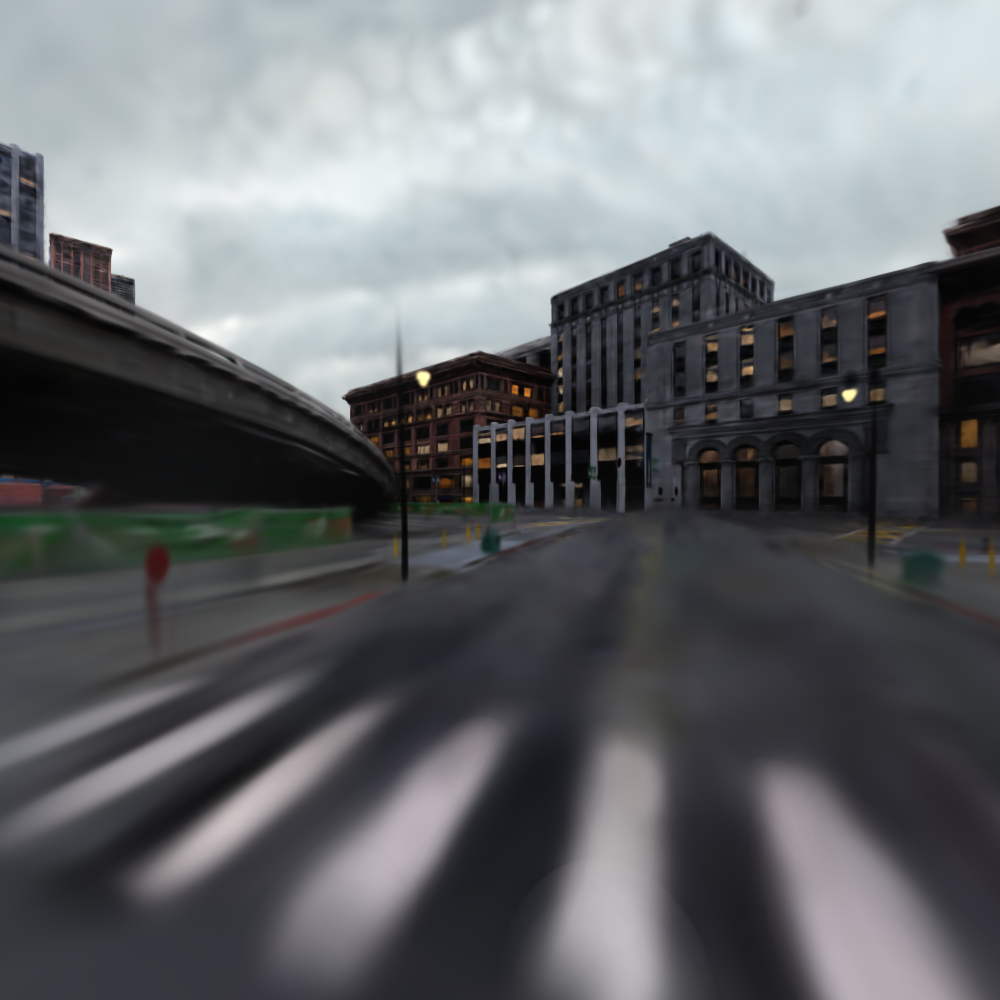

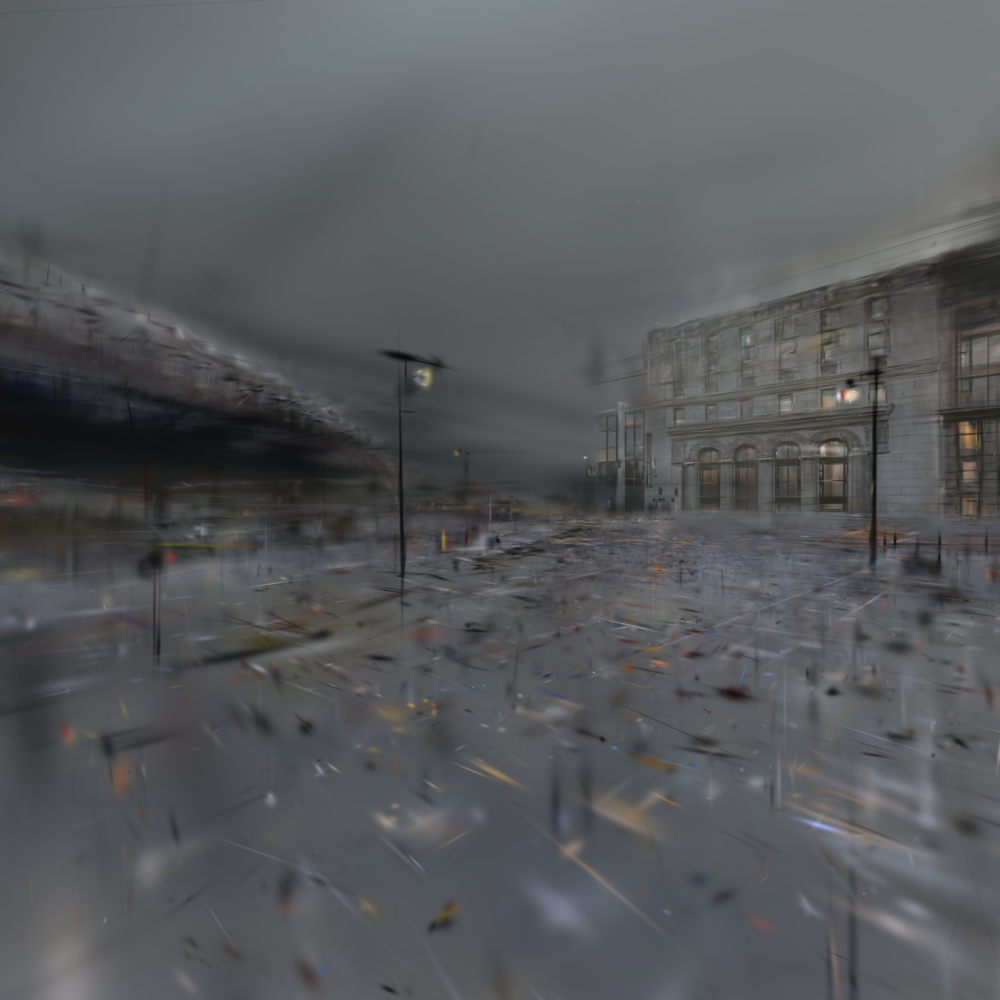

Hierarchical-3DGS suffers from chunking artifacts such as bleeding and ghosting when views at multiple scales are introduced.

.png)

.png)

.png)

While HorizonGS is designed for training at different scales, it fails to adequately address the challenges of ultra-large-scale reconstruction.

Despite providing an LoD system, OctreeGS is unable to synthesize high-fidelity details on large-scale scenes.

If you find our work useful, consider using a citation.

@inproceedings{windisch2026ALoDOfGaussians,

author = {Windisch, Felix and K{\"o}hler, Thomas and Radl, Lukas and D'Urso, Mattia and Steiner, Michael and Schmalstieg, Dieter and Steinberger, Markus},

title = {{A LoD of Gaussians: Unified Training and Rendering for Ultra-Large-Scale Reconstruction with External Memory}},

booktitle = {Proceedings of ACM SIGGRAPH 2026},

series = {SIGGRAPH '26},

year = {2026},

location = {Los Angeles, CA, USA},

publisher = {ACM},

address = {New York, NY, USA},

numpages = {9},

doi = {10.1145/3799902.3811076}

}